Trickle Down Metaphysics: From Nietzsche to Trump

Sometime in late 1887 or early 1888, the philosopher Friedrich Nietzsche – unread, unwell, and practically unknown at the time – had an insight that, as far as he could see, would determine the history of Europe, and by default, that of the world, for the next two hundred years. “What I relate,” he wrote in his notebooks (which, after his collapse into madness in 1889, would tragically fall into the hands of his anti-Semitic, Aryan supremacist sister), “is the history of the next two centuries.” “I describe what is coming,” he continued, and added ominously, “what can no longer come differently…”[1]

What was it that was on its way and whose advance could not be halted? It was, Nietzsche tells us, “The advent of nihilism.”[2] What exactly nihilism is we will get to shortly. Right now I want to focus on Nietzsche’s philosophical premonition and his sense that what he saw and what he had to say about it, would not be understood by his contemporaries, let alone welcomed by them, but could, with any luck, reach the ears of a later generation. What Trump has to do with this must wait until the punchline.

An Untimely Man

Nietzsche always considered himself a man out of time – in more ways than one. One of his earliest works was entitled Thoughts Out of Season, or, as another translation has it, Untimely Thoughts. Readers of his last works, such as The Antichrist, not published until after his final breakdown, can detect the urgency with which he presented the first – and in the end, only – book of what he had intended to be – but never managed to make – his magnum opus, what he called the Revaluation of All Values.

The original title of this never completed masterwork, The Will to Power, was adopted by his sister and used by her when she presented the large collection of notes Nietzsche left behind after his collapse in Turin as the dismembered masterpiece it never was. This non-book, brilliant as anything Nietzsche ever wrote but not in any way on a par with Thus Spoke Zarathustra or Twilight of the Idols, passed through many hands and reached a reading public in many forms, including the insalubrious shape given it by Nazi hacks, courtesy of his Hitler-loving sister.[3] Did Nietzsche know that his days of sanity were numbered, and that he would not be able to fuse together the disjointed jottings making up The Will to Power into a solid systematic articulation of his thought? Did the sense that time was running out compel him to pull out all the rhetorical stops and put everything he had into the manic burst of creative energy that produced not only The Antichrist, but his last dig at his ex-hero Wagner and what must go down as the strangest autobiography ever written, Ecce Homo? His protestations in this daimonically divine attempt to recount “how one becomes who one is,” that he not be confounded “with what I am not!” suggest as much.[4] The tragedy, as every reader of Nietzsche knows, is that this is exactly what happened to him, in more ways than one.

But even as his sanity was heading toward its sunset, Nietzsche saw himself as ahead of his time. He did not write for today, nor even for tomorrow. As he says in the foreword to The Antichrist – which is as compact a display of Nietzsche’s rhetorical pyrotechnics as we could wish – “Only the day after tomorrow belongs to me.” “Some are born posthumously,” he tells us, and he hopes that his readers may be too; he doubts, understandably enough, if they are even living yet.[5]

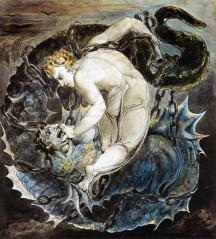

This sense of being ahead of his time led Nietzsche to remark that he can relate his prognosis for the next two centuries because he has already lost his way in “every labyrinth of the future.” He tells us he is a “soothsayer bird spirit who looks back when relating what will come.”[6] He not only sees its irrevocable approach, he has experienced it in advance and has come out the other side. He can tell us what is on its way, what it will mean, and what we can do about it, because he has already gone through it. Like a shaman, Nietzsche is the wounded healer who has had the illness we will all shortly contract, and he is here to tell us how we can not only survive it, but may indeed be made more healthy because of it.

Yet Nietzsche can only hope that his readers, his real readers, will arrive at some future point and look back to his writings in order to understand their present. There certainly weren’t many of them around when he was writing. He knew he wasn’t writing for “those for whom there are ears listening today.”[7] His untimeliness, it seems, is inescapable. When Zarathustra comes down from the mountain top to spread the message of the Overman, the townspeople laugh at him.[8] “They do not understand me,” Zarathustra laments. “I am not the mouth for these ears.”[9]

Nietzsche knew this would be the case. In The Gay Science, written just before Zarathustra, he announces for the first time the revelation that is at the heart of Zarathustra’s message, that “God is dead.” Yet the madman who announces this is greeted with the same laughter that meets Zarathustra’s equally portentous proclamations. “I have come too early,” the madman reflects, “my time is not yet.” Although the deed is done its reality has not yet reached the people, even though it was they themselves who committed this theocide.[10] “This tremendous event,” Nietzsche’s madman reflects, “is still on its way.” “Deeds, though done, still require time to be seen and heard.”[11]

Nietzsche wrote these words in 1882. As I write, 2020, a turbulent year, is heading toward its last season. Almost a century and a half have passed since Nietzsche’s madman entered the marketplace with his lantern lit in the bright morning sun. Close enough, perhaps, to Nietzsche’s “next two centuries” for whatever is on its way to show clear signs of its arrival?

Nihilism

The word “nihilism” was popularized by the Russian novelist Ivan Turgenev in his novel Fathers and Sons, published in 1862. The Latin nihil means “nothing” and so nihilism is the belief in nothing. Whether this means a lack of belief in anything or an active belief in nothing remains debatable. The historian Jacques Barzun, distinguishing nihilism from anarchism, with which it is often confused, remarked that a “real nihilist believes in nothing and does nothing about it.”[12] The anarchist shares a lack of belief in the same things that the nihilist rejects, but unlike his less motivated cousin, he certainly wants to do something about it. In Turgenev’s time, anarchists – those who believed in no government – threw bombs at kings and politicians; they were the terrorists of their day. A nihilist in Barzun’s sense would never have bothered with such pointless exertions, and would have dismissed the anarchist’s apolitical idealism as just another illusion.

For Turgenev nihilism had a political and social context. As the title of his novel suggests, this had to do with the inter-generational conflict between the romantics of the 1840s (the fathers) and the “New Men” (sons) of the 1860s.[13] Bazarov, Turgenev’s protagonist, rejects the idealism of the previous generation and denies the reality of any values other than those apprehended by science – which in effect means any value at all, given that aside from practical and utilitarian ones, which can be quantified and measured, science recognises that values, in the idealist sense, do not exist. This “faith” in only what can be known “positively” – that is, quantifiably – would ironically be christened “positivism,” and became associated with the ideas of the founder of sociology, Auguste Comte. In The Devils, published a decade after Fathers and Sons, Dostoyevsky dramatized the consequences of the nihilism of the New Men when their ideas are put into action. By the end of the novel, there are bodies strewn left and right and a town is in flames, all in the cause of the positive “progressive” ideas of the New Men in town.

Values Old and New

Nietzsche knew of Russian nihilism; he was a reader of Dostoyevsky. But his notion of nihilism was more encompassing than Turgenev’s and did not allow for the religious or spiritual response to it that Dostoyevsky explored in his last novel, The Brothers Karamazov. Nietzsche was aware of the dangers involved in the notion that, if nothing is “true,” in the old, idealist sense of Truth, then everything is “permitted,” a notion that Dostoyevsky explored in Crime and Punishment. But Nietzsche also saw this terrible “truth” as an opportunity for the creation of new values.

Why were new values needed? Because, as the nihilists believed, the old ones were no longer credible. But Nietzsche disagreed with the nihilists that all values were hollow. Hence his attempt at a “revaluation of all values.” To put it simply, just because the values that had hitherto informed and motivated western civilization were no longer tenable – as Nietzsche believed was the case – this did not mean that we could not create new values to help us past the catastrophe that he saw was unavoidable. Ultimately, for Nietzsche, nihilism can have a positive effect, in that it can clear the ground of outmoded ideas and create a space for a fresh start. There are, however, no guarantees.

The Uncanny Guest

Marx had warned that a spectre was haunting Europe. For Nietzsche, that wraith, communism, was only a party crasher. The true spirit knocking at the door was nihilism. “Whence comes this uncanniest of all guests?” Nietzsche asks. He has arrived, Nietzsche says, because “the values we have had hitherto thus draw their final consequence; because nihilism represents the ultimate logical conclusion of our great values and ideals…”[14]

In a nutshell, Nietzsche is saying that the very pursuit of truth, both in the religious and scientific sense, which the west has held as the acme of perfection, and the obligation to honesty that compels us to obey it, have arrived at the paradoxical truth that there is no “truth” in the sense of some “objective” reality that our intellectual and spiritual integrity demands we acknowledge.

As Nietzsche did, we can see Plato as the source of this pursuit of truth, as his philosophy informed both the Christianity that embodied the spiritual “hunger for truth” and the later science that sought for the physical truth about the universe through mathematics. Nietzsche is saying that this highest value has undermined itself. Our very honesty compels us to recognise that the aim of reaching the goal of Truth has led us to the truth that the goal does not exist, at least not in the sense that we had believed it did. There is no “higher world,” either in a Platonic sense of ideal forms, whose shadow is the world of the senses, or in the Christian form of a loving God who provides meaning to our lives here below.

I should point out that Nietzsche accepted the godless, meaningless universe that the science of his time was actively introducing to western consciousness, and which the science of our own time continues to promote as a “true” vision of things. As the physicist Steven Weinberg remarked, “the more the universe seems comprehensible, the more it also seems pointless.” This assessment is shared by the majority of his colleagues. Nietzsche agreed that the universe was meaningless, but he believed that our lives didn’t have to be.

Yet even the ‘truth’ of science, which has pulled the carpet out from under any “higher truths,” is not immune from the ‘devaluation’ Nietzsche detects. Science bases itself on “facts,” the kind of measurable, quantifiable knowledge that informed positivism and the New Men. Yet Nietzsche insists that “there are no facts.” What science takes as facts are interpretations. They may have practical value, meaning they work, but ultimately they are really “a kind of error without which a certain type of animal finds it impossible to live.”[15] As the philosopher Bergson, Nietzsche’s younger contemporary, would argue, the intellect is an organ in the service of life.[16] The job of the intellect, Bergson argued, is to scan the world and reduce its complexity to a highly edited picture that enables us to survive and act in it. He would have agreed with Nietzsche that in the case of facts, “the value for life is ultimately decisive.”[17] The “truth” that science “reveals” does not tell us what the world is “really” like; it is an interpretation that allows us to manipulate the world to our best advantage. The “facts” that science celebrates as the “truth” about the world are really very useful falsifications, informed with the aim of reducing the world’s reality to that amount of it we can make use of.

We may ask whether “the truth that there is no truth” is an insight hoist by its own petard. For if it is true, then there must be, after all, some kind of truth that it shares in, and so it refutes itself. And if it is not true – as it must be if there is no truth – then there is no reason to pay attention to it. But for the moment, let’s let these logical snags lie.

Forgetfulness of Being

Someone who took Nietzsche’s announcement of the advent of nihilism very seriously was Martin Heidegger, along with Ludwig Wittgenstein, probably the most influential philosopher of the twentieth century. Heidegger agreed with Nietzsche that Plato was the source of the problem, but his response to this was rather different from Nietzsche’s. Whatever we may think of his ideas – and Nietzsche wanted nothing more than that we should think our way through them – we have to admit that Nietzsche is one of the most readable of philosophers, if not the most readable. Not many are. Bergson, whom we’ve mentioned, is one. Schopenhauer and Kierkegaard are too. But most philosophers are not page turners and the worst offenders in this regard are German – that is why Nietzsche is such an exception. He even said that he wished he hadn’t written Thus Spoke Zarathustra in German.

Heidegger falls into the unreadable philosopher camp. Where Nietzsche distils his ideas into their most compact form and often invites his reader to complete the thought, using punctuation in a way that expresses his meaning as much as his words do, Heidegger is often prolixity itself, forcing his reader to proceed at a snail’s pace through his eccentric use of otherwise familiar words and his frequent neologisms, in contrast to Nietzsche’s exhilarating dance. It is a shame that Nietzsche was not around and compos mentis enough to be able to comment on Heidegger’s interpretation of his work – or indeed on that of so many others. One suspects that his great philosophical descendant may have been one of those whom Nietzsche worried would “confound him” with what he “was not,” as so many did. Because, from what we can take as Nietzsche’s point of view, this is exactly what Heidegger did.

One may be excused for wondering if “the secret king of thought,” as Heidegger’s student and mistress Hannah Arendt called him, was doing his best to out philosophise Nietzsche, as Hegel, another difficult German thinker, had out philosophised all philosophy before him.[18] Some Nietzsche scholars, such as Michael Tanner, have taken Heidegger to task for taking “the view that the ‘real’ Nietzsche is to be found in the notebooks,” a view, we’ve seen, that was begun and promoted by Nietzsche’s odious sister.[19] This, Tanner argues, allowed Heidegger “to peddle his own philosophy as deriving from and also critical of Nietzsche,” which is exactly what Heidegger does.[20]

Where Nietzsche believes that he has seen through the falsity of metaphysics, which we can understand as rational speculation on the character of a “higher,” “beyond,” or “transphysical” world, as the Greek prefix “meta” indicates, Heidegger one-ups him by including Nietzsche’s notion of “the will to power” as the last expression of the metaphysics Nietzsche wanted to undermine. Nietzsche believed he had escaped from the limits and constraints of metaphysical thinking and that the new values he foresaw would provide men and women in the “post-metaphysical world” with inspiration to create a new vision of human existence. According to Heidegger, Nietzsche had fooled himself, and was blind to the fact – which Heidegger, of course, saw very clearly – that what he in fact had done was to bring western metaphysics to its destined conclusion.

Heidegger spells this out in his essay “The Word of Nietzsche: ‘God is Dead.’”[21] Briefly put, for Heidegger, Nietzsche’s notion that “the will to power” – which he posits as the driving force behind life, an idea that influenced, among others, Alfred Adler and Adolf Hitler – proceeds by creating values keeps it within the purely human realm and maintains the perception of the world as ready for our use. For Heidegger, this means that Nietzsche, like all the philosophers before him, remains blind or inattentive to what Heidegger considers the fundamental concern of thought: the question of being.

A Wrong Turn at Plato

Heidegger agreed with Nietzsche that the road the west had taken since Socrates had led to the uncanny guest at our door – or, as I hope to shortly show, sitting in our living room. But where Nietzsche saw the loss of instinct and contact with the vital powers of life through the rise of Socratic rationalism, Heidegger saw something that he felt was more fundamental: loss of contact with being itself. What is being? That is a good question and one that Heidegger believed the west had lost sight of when Socratic reason ousted the early mythopoetic philosophising of the pre-Socratics from pride of place.[22]

We become aware of being when the fact of our own existence, or that of anything else, strikes us as surprising. This is not a definition of being – it defies that – but a way of recognising when we are remembering it. Because for Heidegger, most of the time we do not remember it; we suffer from what he calls “forgetfulness of being.” This was a diagnosis of modern humanity that he shared with the esoteric teacher Gurdjieff, who, I must say, can at times be as unreadable as Heidegger; see his monumental masterwork of digression, parenthetical remarks, and dependent clauses, Beelzebub’s Tales to His Grandson.[23] Gurdjieff also shared with Heidegger the belief that the one sure-fire method of dissipating our forgetfulness of being was to achieve and maintain a vivid awareness of the reality of our death. We can say that both saw the virtue in Dr Johnson’s remark, often quoted by Colin Wilson, that “the thought that one will be hanged in a fortnight concentrates the mind wonderfully.” When the mind is thus concentrated, we are no longer forgetful of our being.

Heidegger believed that our forgetfulness began when Being – he capitalises the fundamental fact of existence to distinguish it from the plurality of existing things, i.e. “beings” – was lost sight of by Plato and the philosophers that followed him. The pre-Socratic philosophers – Parmenides, Heraclitus, Empedocles – all experienced a kind of primal awe in the face of existence. Their response to it was a sense of wonder, of astonishment, which informed their mythopoetic attempts to capture some sense of the sheer strangeness of being. We can say that they were fascinated by the question “Why is there something rather than nothing?” that later philosophers, such as William James, would also ask and which curious children still do, to the dismay of befuddled parents. Socrates’ rationalism, turned into a philosophical system by Plato, put this question aside and sought rational explanations for the world. This, Heidegger argued, started the process of gaining rational control of the world – technology – the first step in the “destining of being” that led, according to Heidegger, to Nietzsche’s will to power, the end of metaphysics, and the advent of nihilism.

Inauthentic Being

Heidegger had an enormous influence on twentieth century philosophy, and he continues to be a powerful influence today. At the risk of simplification, for brevity’s sake, we can say that his influence can be seen in two different currents, flowing from his thought. The first was existentialism. Kierkegaard, Dostoyevsky, and Nietzsche are generally seen as the ‘fathers’ of existentialism, but Heidegger put it firmly on the philosophical and academic map, although, to be sure, Heidegger denied he was an existentialist. The second current, which we will get to shortly, was deconstructionism, and its fellow traveller, postmodernism.

Existentialism is most popularly associated with the French philosopher and novelist Jean-Paul Sartre and came to wide public attention in the years following the end of WWII. The existentialists of la rive gauche – Sartre, Simone de Beauvoir, Albert Camus and their many hangers-on – were a kind of sophisticated anticipation of the Beat Generation of the 1950s, who took up some of their attitudes (among them, promiscuity, heavy drinking, and black turtlenecks) and gave them an American twist, although, to be sure, the existentialists were of a much more intellectual stamp than Kerouac, Ginsberg et al. The existentialists accepted the idea that the values of the pre-war period were hollow. Human beings lived in a meaningless, “absurd” world and the people who refused to recognize this – whom Sartre called “salauds,” French for “bastards” – were guilty of what he called “mauvaise foi,” “bad faith,” and lived “inauthentically.” That is, they wallowed in “forgetfulness of being” and accepted the false, but comforting world of human values, consciously ignoring the insight – known to Sartre for some time – that their lives were “contingent,” that is, unnecessary.

The essence of existentialism can be summed up in Sartre’s famous pronouncement that in human beings “existence precedes essence.” This means that, unlike a chair or a computer, we exist before we know why we do. A chair exists because someone made it to perform a function, likewise a computer. What is our function? According to Sartre and Co, we have none. There is no reason for our existence. We are “condemned to be free,” meaning that we have to create our own meaning, something Nietzsche had pointed out half a century earlier. Those who refuse to face this frequently depressing challenge embrace “inauthentic being,” which is a kind of cowardice in the face of our own inexplicable existence. For some, Sartre himself expressed a good deal of bad faith when he tried to wed existentialism, with its emphasis on the responsibility of the individual to make use of his freedom, to accept the burden of choice, with Marxism, which cares nothing about the individual and his freedom, in his Critique of Dialectical Reason.[24] In Sartre’s favour it may be said that his embrace of Marxism was motivated more by his hatred of the bourgeoisie – salauds all – than his appreciation of dialectical materialism.

Dismantling Western Metaphysics

The other current flowing out of Heidegger’s dark thought, deconstructionism, took a different route. Where Sartre focussed on Heidegger’s phenomenological analysis of human existence, presented in his truncated masterwork Being and Time – Sartre one-upped him with his own Being and Nothingness – the deconstructionists who came after Sartre concentrated on a different aspect of Heidegger’s thought.

What was needed in order to mitigate the effects of the destining of Being toward nihilism, Heidegger believed, was to go back to the beginning of western philosophy and dismantle it. Heidegger’s one-time teacher, Edmund Husserl, the founder of phenomenology – out of which existentialism sprang – took as his philosophical battle cry “To the things themselves!” In essence this meant forgetting about everything that philosophy had so far believed it had learned about the world and attempting to approach it without presuppositions, to forego trying to explain reality and simply try to describe it. It was this strategy that led to Heidegger’s “fundamental ontology,” ontology being the study of Being.

In Heidegger’s case it was not back to the things themselves, but back to Anaximander, Heraclitus, Parmenides and the other pre-Socratic “thinkers” – not “philosophers,” an important distinction for Heidegger – who were not infected by the Socratic fascination with reason.[25] If we were to remember Being, we had to return to when our amnesia set in, and try to catch the forgetfulness before it established itself as a particularly pernicious habit.

To this end Heidegger spoke of what he called “the destruction of metaphysics” or “the destruction of the history of ontology,” the taking apart of the whole edifice of western philosophy, its slow and painstaking dismantling.[26] This was to be the focus of the projected second part of Being and Time, which Heidegger eventually abandoned, perhaps recognising that producing another obscure weighty tome would add more to the very edifice he wanted to take down. In later years he wrote essays on language, art, poetry, technology and exchanged the polarity of Being and Time for that of “lighting” and “presence.” Lichtung, lighting or, as it is sometimes translated, “opening,” is the space in which the presence – Anwesenheit – of Being can appear. Truth for Heidegger is alētheia, “unconcealment,” a revealing of the “things themselves,” and not how they appear when we see them as “useful”.[27]

This was the point of the destruction of the history of ontology: to open the doors of western philosophy’s perception, to restore what the poet Gottfried Benn called “primal vision,” to achieve the radical astonishment in the face of Being that the earliest thinkers experienced, and to encounter its presence, directly, unmediated, without the carapace of millennia of concepts and suppositions.[28]

Deconstruction Sets

Someone who picked up on this aspect of Heidegger’s thought was the French philosopher Jacques Derrida, the most well-known proponent of the philosophical and literary movement known as deconstructionism. This got its start in the 1960s – as did postmodernism, with which it is generally allied. In little more than a decade, the two would pretty much conquer the academic world, especially in the United States, where academics are routinely cowed by anything coming over from Europe. Derrida was also heavily influenced by Nietzsche.

The name “deconstructionism” alone should give us an idea of what it is about. Like Heidegger, Derrida wants to dismantle western philosophy, and like Nietzsche he agrees that the pursuit of truth that has engaged philosophy and other disciplines for centuries, is chimerical. But Derrida goes further than both in undermining the notion that philosophy at any time was a conduit through which the truth about reality could ever reach human consciousness.

Heidegger began his destruction of metaphysics by abandoning his commitment to Husserl’s approach to philosophy. Husserl would have rejected Nietzsche’s contention that the pursuit of truth unwittingly undermines itself, although, to be sure, in his last days he was dismayed by the kind of reductive tack science had taken – a result of the “positivism” which today is known as “scientism” – and spelled out his concerns in his last, unfinished work The Crisis of European Sciences and Transcendental Phenomenology, published in 1936, two years before his death. But fundamentally Husserl believed that the aim of philosophy is to understand the universe and to arrive at truth, and he believed his phenomenological method was a means of doing that. Yes, an enormous number of presuppositions and assumptions about reality have obscured our view, but we can clean our doors of perception through phenomenology and see clearly. Heidegger broke with Husserl because he believed his teacher retained too much of the idealism of traditional philosophy, the very metaphysics that first Nietzsche and then Heidegger wanted to overcome.

Yet even though Heidegger rejected Husserl’s belief in phenomenology’s ability to arrive at truth, free of our assumptions about it, he still retained the belief in what he called “presence,” which, as we’ve seen, was the name he gave Being in his later work. This “presence,” however, was not uncovered by Husserl’s approach, but by a kind of “listening” that, in many ways, seems very close to a kind of mystical contemplation; Heidegger even uses the term Gelassenheit, which means a kind of “letting go,” and is associated with the thirteenth century German theologian Meister Eckhart.[29] In a nutshell this means that if we let things “be” – that is, if we do not see them as there only for our use – they will “speak” to us. This is also why so much of Heidegger’s later writing is focused on poetry. Poets, like the pre-Socratic philosophers, do not try to explain the world, but to respond to it. For Heidegger, the language of poetry comes closer to presenting – “presence-ing,” if I’m allowed a Heideggerian coinage – the world than that of philosophical analysis. Language, for Heidegger, is the house of Being, and poets are its builders.

The Absence of Presence

Derrida starts with Husserl too, but he goes further than Heidegger in denying even that phenomenological apostate’s positing of “presence.” There is no presence in the world, Derrida and his many epigones tell us, only an absence, or, at best, a différance that, according to him, makes all the difference. We can say that where the existentialists who followed Heidegger were concerned with the “inauthenticity” that comes with “forgetfulness of being,” the deconstructionists, and their postmodern fellow travellers, decided it was best to forget about Being altogether. Being, along with its more poetic repackaging as “presence,” is simply the latest illusory object to occupy the ever muddled minds of philosophers. The pursuit of Being and the letting-be of Presence is a hunt for a will o’ the wisp.

Derrida arrives at this conclusion through a consideration of language. If Heidegger believed that language is the house of Being, Derrida wants to show that this house simply does not exist, and that at best language is more like an itinerant wanderer, pitching a tent here and there and not staying in the same place for any length of time. Two central sources for this view are the work of the Swiss linguist Ferdinand de Saussure and an early essay by Nietzsche, “On Truth and Falsehood in an Extra-Moral Sense.”

Saussure’s basic insight – if indeed it is one – is that language functions through difference, that is, the meaning of words is rooted not in the things they appear to name, but in the differences between words themselves. This is the source of Derrida’s différance. Things are the “signified” and words are their “signifiers,” but the meaning words appear to have does not depend on the qualities and characteristics of the signified but on the context of all other signifiers. Language is an arbitrary system of signs, and the words we use to describe the world could all be completely different and still serve this function as long as their users all agreed on the conventions of the system. We could all call blue “green” and vice versa, and as long as we all stuck to this, it would make no difference. This, of course, is a very different view of language from that of some mystical accounts of it, such as the Jewish tradition of Kabbala, which sees language not only as the house of Being, but as containing the very energies at work in the creation of the world – at least the Hebrew alphabet is so endowed. I also suspect that no true poet would consider the language that he uses to reveal the mystery of things as being nothing more than an arbitrary system of conventional signs. Yet Derrida via Saussure assures us it is.

Deconstructionism maintains that the necessity for context in order for signifiers to actually signify – for them to work – reveals a fundamental ambiguity in language. We know the same word can mean different things in different contexts, and how easy it is for us to misunderstand each other because of this. (“That is not what I meant.” “Oh, really?”) This was the aspect of deconstructionism that spread like wildfire in the literary criticism departments: the idea that the author is the last person to know what his work is actually about, and that the job of the deconstructionist critic was to find the loose thread – the aporia – in a text and pull it, so that its apparent meaning unravelled. Soon literary criticism professors were showing how creatively they could unravel any number of classics, mostly by the Dead White European Males who were coming under attack from other quarters as well. That none of these critics or their fellow travellers produced any classics of their own that their colleagues could unravel has perhaps understandably rarely been mentioned. So has the fact that the ambiguities of language were well known to many writers, poets, and philosophers before them.

The conventional view of language was also expressed in Nietzsche’s early meditation on the essentially metaphoric character of words. In essence, a metaphor stands for something else; it is a pictorial way of describing the world, it presents an image, and hence, is closer to poetry than to prose although, to be sure, our prose is shot through with metaphors, most of which we do not recognise as such. And this, in fact, is Nietzsche’s argument. I say a pretty woman’s face “bloomed” and that a man “burned” with anger. An extremely literal-minded person would ask to see the petals and ash. We do not even think of this because we are no longer surprised by the correspondence between the image and the beauty and anger to which we want to draw attention. These metaphors have become conventions, just as “water under the bridge” and “leaving no stone unturned” are. We no longer recognise their pictorial character.

This leads Nietzsche to conclude that words are not labels we stick on things, which, by doing so, allows us to “know” them and “explain” them. They are metaphors for the things that in truth – that word again – have no relation to the world other than a practical one, which is the case with all our other falsehoods.[30] Like the “facts” of science, words are necessary and useful falsifications, that aid in our “will to power” over the world. Language enables us to manipulate the world but it does not tell us anything about the world’s reality. Readers of Sartre’s novel Nausea will recall the queasiness that comes to his protagonist at the sight of the root of a tree or of a doorknob in his hand.[31] The words that he had hitherto used to understand the world have slipped off things, rather as if the adhesive fixing them in place had evaporated. The things are now free of our categories, the verbal grid we place over them to, as it were, keep them in place. Their sheer “isness” remains, their brute actuality, shorn of the comforting familiarity language places over them. “I said with the others: the ocean is green, that white speck up there is a seagull…then suddenly existence had unveiled itself.” We can experience something similar if we take a word and repeat it over and over. Soon what happens is that its meaning seems to dissolve and it becomes merely a sound in our mouths. This, in effect, is what Nietzsche is saying words “really” are.

Sartre’s protagonist knows the truth that words are “a referentially unreliable set of almost entirely arbitrary signs, made up by us in order to safeguard life and the species.”[32] Language, for Nietzsche at even this early stage, is a “mobile army of metaphors” and truths are “illusions about which one has forgotten that this is what they are,” rather like coins that have been worn down by use and now “matter only as metal, no longer as coins.”[33] Words, like facts, are for Nietzsche interpretations. There is no compliant objective reality that they refer to and by which we can gauge their accuracy. Hence the deconstructionist dictum that “there is only the text,” and that all texts are open to infinite interpretation. In other words, anything goes.

Postmodernity Ho!

This notion of a lack of presence or “essence” to things is at the heart of postmodernism, although, to be sure, postmodernists themselves would, by definition, deny that postmodernism had a heart, that is, an essence. Indeed, during my brief time as a graduate student in the early 1990s, no greater condemnation could be put upon one than to be called an “essentialist,” for reasons that will be forthcoming. It is this seemingly self-erasing character that makes postmodernism “definition resistant” in the way that some fabrics can be made “water repellent.” Given that more than one commentator has pointed out that defining postmodernism is “a minor academy industry in itself,” I do not propose to add to that work force here.[34] To begin with, modernism, the host onto which its “post” parasite has become firmly attached, is itself open to many interpretations and definitions.

In its simplest sense, by modernism we can understand the general shift from a religious to a scientific view of the world that took hold in the early seventeenth century, although it got its start with Copernicus a century or so earlier. The “cash value,” as William James would say, of this shift was that the human mind, for millennia held in check and stunted by the delusions and superstitions of religion, was now able to discover the truth about the world, through the unfettered activity of what we now know as science. Postmodernism, we can say, at least in this context – its protean character has many applications – got going when it became clear that the promissory notes that modernity had counted on were bouncing at the bank.[35] Of course many along the way knew they would: Goethe, Blake, and, as we’ve seen, Nietzsche were some of them. But the dud checks really started piling up sometime post WWII, when the “modern world” and the “grand narratives” informing it no longer seemed to provide the kind of security and finality they had promised they would.

Another part of the “postmodern condition” – the title of a book by Jean-François Lyotard that announced the end of “grand narratives” and put postmodernism on the philosophical map – is the idea that the simulation of reality has taken over from the original. We have become a “society of the spectacle,” in which, as Jean Baudrillard tells us, the representation of reality has usurped that which it represents. Less and less do we experience reality unmediated by some form of representation – the ubiquitous smartphone is the prime example – with the bizarre result that the most popular form of entertainment as we head into the third decade of the twenty-first century is “reality television.” Here, reality, unadorned, unembellished, untouched by artifice and direct from your household to mine, holds captive millions of “viewers” who, in general, suffer from the forgetfulness of “real reality” that troubled Heidegger. There are even reality television shows about people who watch reality TV. And in our efforts to enjoy this ersatz reality, we enhance our representations of it with improvements such as “high definition” (HD) and “virtual reality” (VR), while actual everyday reality suffers neglect.[36]

Reality is Up For Grabs

So at the same time that university students in humanities departments have for decades been spoon fed a deconstructive and postmodern diet, on the home front “reality” has been subjected to the same kind of dismantling. Or perhaps in this case substitution is the proper term. From both, however, the fundamental “takeaway” is that reality is malleable. It is up for grabs. We create reality, either on a large scale cultural level, given that, for postmodernism and its fellow travellers, reality is relative to a given culture, that is, it is historically produced; or on the micro-cultural level of television shows. Either way, the notion of a stable, fixed, objective reality, accessible to human perception and amenable to being known, that is real and true for all cultures at all times, has become for many of us “so twentieth century.”

Indeed, for postmodernism and its various allies it has become an object of scorn. “Essentialism,” the notion that, contra deconstruction and postmodernism – and indeed Sartre and some existentialists – things, ourselves included, do have an essence, a nature, that is not historically or culturally produced, is seen as the source of a kind of “metaphysical imperialism,” an expression of the will to power, to dominate. It is an expression of the Eurocentric, “phallogocentric,” dead white male dominated “structure of discourse” that has oppressed all alternative discourses certainly since Plato, or so we are told.

Postmodernism and deconstructionism were here to dismantle this edifice and lead the west in a generally left direction. The irony here is that postmodernism and deconstructionism – both of which can be seen as informed with a kind of Marxism recidivus – have their roots in “men of the right”, not the left.[37] Neither Nietzsche nor Heidegger was in any way a leftist, although, as Allan Bloom pointed out, that is exactly the sea-change – or distortion – they underwent when deconstructionism and postmodernism took over American campuses, with some help from the Frankfurt School.[38] Derrida was a Marxist, as were others to emerge from “May ’68,” the “almost revolution” that brought Paris to a standstill at the height of that turbulent decade. The slogans that inspired that eruption, “Power to the Imagination,” “Take Your Desires For Reality,” would find themselves on the syllabi of literature and philosophy classes a decade or so later.[39] But what the men of ’68, who became the “tenured radicals” of the 70s and 80s, did not know was that their deconstruction of what they saw as an oppressive reality would not lead to the “progressive” society that they were aiming at, but to something quite the opposite.

Because if reality is up for grabs, there is no telling who will grab it.

The Party’s Over

The initial effect of this dismantling of truth and reality was a sense of liberation. It was party time in philosophy and literary criticism departments and the students were soon taking it to the streets. Scientists may have shaken their heads – if they were at all aware of it – but they themselves had gone through something similar concerning, to be honest, a more fundamental level of things, with the “quantum revolution” of the early twentieth century. Even so, in the 1970s and 80s, science had embraced its own chaos, in the form of “chaos theory” and then “complexity,” while Paul Feyerabend’s “anarchic” form of science rivalled some of Derrida’s less comprehensible productions for sheer eccentricity. But the shenanigans of elementary particles did not seem to impinge on the social and political world in the way that the radical ideas emerging from humanities departments did.

Yet once the initial celebrations had quieted and the deconstructive dust had settled, alert minds noticed something. The dismantling had cleared a great space and the bricks of what had once occupied it were scattered about, some in neat stacks, some in random piles. That work was done. But nothing seemed to be going up in its place. Some argued that this was as it should be; the “grand narratives” were gone, and it was the time for the more local stories to be heard. But many of these started talking over each other, interrupting each other, arguing or, as often as not, shouting each other down. The process of liberation seemed to have turned to one of disintegration as the hitherto oppressed narratives now competed with each other for attention and dominance. The deconstruction, we could say, was deconstructing the deconstructors.

This should not have been surprising. Neither deconstructionism nor postmodernism, in whatever form it takes, possesses anything positive – in the general sense of the word, not that of “positivism.” They are in essence – unavoidable, I’m afraid – content-less. Postmodernism merely means whatever comes after modernism. And you can’t deconstruct anything from scratch. To take something apart it first has to be built.

At the same time as the postmodern party was turning into a somewhat disheartening morning after, an ambience of general distrust had taken hold of the popular mind. A “hermeneutics of suspicion,” as the philosopher Paul Ricoeur had called it, had settled in, a cynicism that, in its desire not to be taken in, subjected everything to doubt. Yet the popular mind had also acquiesced in a kind of discontented fatalism, convinced that the individual is at the mercy of forces well beyond his control, in the world and in himself, something that both postmodernism and deconstructionism had repeatedly asserted. The individual as such no longer existed; he was merely an empty space in which vague but omnipotent “social forces” operated. Ironically, this suspicion of once trusted sources was allied with a mind so open to a variety of “conspiracy theories” that it was ready to swallow practically any “alternative” account, as long as it contradicted whatever the “official” one was.

Trickle Down Metaphysics

It seemed that, by the second decade of the twenty-first century, the uncanniest of guests, whom Nietzsche saw was on his way, had indeed arrived, perhaps a little ahead of schedule; but after all, we live in accelerated times. The nihilism of the rarefied metaphysical heights of Nietzsche’s mountain top seemed to have flowed down to the lowlands of everyday life, in a process that I call “trickle down metaphysics.”[40] It passed from Nietzsche, who, writing for the day after tomorrow, warned it was on its way, to Heidegger who took it as the starting point of his “deconstruction of the history of ontology.” This project was happily absorbed and eagerly carried on by the deconstructionists and postmodernists, who preached it to students who swallowed it like mother’s milk and who widened the target to include practically all of western culture. Thus began what Jacques Barzun called “the Great Undoing,” the devaluing of the western intellectual and cultural tradition because “Western Civ Has Got to Go.”[41] At the same time, through some strange process of osmosis facilitated by that mysterious entity the Zeitgeist, practically the same ideas were becoming de rigueur in popular culture and consciousness, until reality had become so attenuated that we have to look for it now on television. The representation has taken over from the represented. The simulation has replaced the original.

Enter Trump – Finally

And what does Trump have to do with all this, you ask? Patient reader, I will tell you. He is the simulacrum that has replaced the reality, one of the New New Men who make real political use of the idea that reality is up for grabs.[42] He has stepped into the space emptied by deconstructionists and postmodernists and made the transition from reality TV to the Real Thing. He has crossed the ontological checkpoint between false and true while occupying both sides simultaneously. I am sure he has never heard of postmodernism, deconstructionism, nihilism, Nietzsche, Heidegger or anyone else I’ve mentioned. But he embraces the notion that what we call truth is an interpretation, a falsehood designed to help us manipulate the world to our best advantage, and he has run with it.

He was well primed for the job. First there is his apprenticeship as a reality TV star on, aptly enough, a program called The Apprentice, in which he hired and fired and wore the impressive overcoat, as he does today. But even before this, he had absorbed a philosophy of life that had at its basis the belief that reality is what we make it. As I point out in my book Dark Star Rising: Magick and Power in the Age of Trump, Trump’s own apprenticeship was conducted under the tutelage of America’s most positive thinker, the Rev. Norman Vincent Peale, whose sermons Trump had attended since childhood and whose book, The Power of Positive Thinking, taught Trump the secret of success. This can be summed up in a dictum that one suspects Trump repeats like a mantra: “Facts don’t matter. Attitudes are more important than facts.”[43] And the fundamental axiom of Peale’s “positive thinking” is one it shares with any number of New Thought philosophies that guarantee their devotees mastery of life: we create reality.

It is doubtful that Nietzsche would have appreciated the connection – he has already been misappropriated many times – but what we have here, I think, is a vulgarised expression of his insight into the “false” or at least interpretive character of facts. As I’ve pointed out, this had been a mainstay of the philosophers who followed Nietzsche’s lead, but their influence was mainly limited to the academic or cultural world, and had little effect on the man or woman in the street. But with the advent of “post-truth” and “alternative facts,” the notion that facts are really interpretations of reality that enable us to manipulate it to our best advantage has taken centre stage.

Let me say again that Trump most likely never heard of Nietzsche and the metaphysics that troubled him on his mountain top, nor of the philosophical gullies and crevices through which it trickled down to reach our TV sets and Twitter feeds today. But it seems that he has unwittingly but cannily taken advantage of the epistemological vacuum that has come in postmodernism’s and deconstructionism’s wake. And so far, nothing has stopped his creative use of truth and reality, because truth and reality have been denuded of any power to do so, courtesy of their being made redundant.

I should also mention that in Dark Star Rising I show how there is reason to believe that Trump supporters with a taste for a punked-up form of “positive thinking,” what is known as “chaos magick,” used the internet itself in order to help him into office, enabling the representation of reality to become the genuine article. I cannot tell that story here – readers can find it in the book – but like “positive thinking” and postmodernism, the fundamental belief at the heart of chaos magick is that reality is malleable.[44] It is up for grabs. I also suggest in the book that, although he most likely never heard of chaos magick, Trump seems to have a natural affinity for it. If nothing else, he certainly enjoys creating chaos.

And Now?

So where do we go from here? For one thing we can go back to Nietzsche and look at the strategy he proposed to help his readers get past the wasteland of nihilism.[45] He saw it coming. We are in it. Remember that he wrote for the day after tomorrow, which, I suggest, means us. He knew that it would be no picnic and that it might take centuries for the fallout from the death of God – or any other external source of meaning and purpose – to settle and allow any kind of creative response to arise. We need not accept his schedule and there is no time like the present. And while the death of God may not trouble us in the same way that it did an earlier generation – we are content to announce his probable non-existence on bus hoardings – the spiritual vacuum it created remains.[46]

Yet we too can be “untimely men” and recognise that the fact that a popular form of nihilism informs our culture means that those of us who are aware of this are already to some degree beyond it, in the sense that a person who knows he is ill has a better chance of getting better than one who doesn’t. And in fact there is a whole body of work aimed at doing precisely this, coming from a variety of sources. I have written about some of it in my books.[47] So the situation may not be as bad as it sounds. Nietzsche was not the only one who sought a “revaluation of values.” Others did too. When Nietzsche’s madman announced the death of God, he soon realised that he had come too early. Although the deed was done, there were few who were ready to appreciate what it truly meant. We don’t need a madman in a marketplace announcing the death of nihilism. But it could be that its demise is on its way and in some quarters has already taken place. It may only be a matter of time before word of it gets around.

London August 2020

[1] Friedrich Nietzsche The Will to Power translated by Walter Kaufmann and R. J. Hollingdale (New York: Random House, 1967) p. 3.

[2] Ibid.

[3] An excellent account of Elisabeth Förster-Nietzsche and her influence on Nietzsche’s posthumous career can be found in H.F. Peters Zarathustra’s Sister (New York: Markus Wiener Publishing, 1985).

[4] Friedrich Nietzsche Ecce Homo (Harmondsworth, UK: Penguin Books, 1979) p. 33.

[5] Friedrich Nietzsche Twilight of the Idols and The Anti-Christ translated by R.J. Hollingdale (Harmondsworth, UK: Penguin Books, 1977) p. 114.

[6] Nietzsche 1967 p. 3.

[7] Nietzsche 1977 p. 114.

[8] Übermensch in German, often mistranslated as “superman.”

[9] Friedrich Nietzsche Thus Spoke Zarathustra translated by R.J. Hollingdale (London: Penguin Books, 1969) p. 47.

[10] Friedrich Nietzsche The Gay Science translated by Walter Kaufmann (New York: Vintage Books, 1974) p. 182.

[11] Ibid.

[12] Jacques Barzun From Dawn to Decadence (New York: Harper Collins, 2000) p. 630.

[13] Gary Lachman The Return of Holy Russia (Rochester, VT: Inner Traditions, 2020) p. 242.

[14] Nietzsche 1967 pp. 3-4.

[15] Ibid. p. 272.

[16] Henri Bergson Mind-Energy (London: The Macmillan Company, 1920) p. 47.

[17] Ibid.

[18] George Steiner Lessons of the Masters (Cambridge, MA: Harvard University Press, 2003) p. 83.

[19] Michael Tanner Nietzsche (Oxford: Oxford University Press, 1994) p. 5.

[20] We may be allowed to ask if the fact that Nietzsche’s sister was an enthusiastic supporter of National Socialism and that her version of The Will to Power was the one promoted by Nazi hacks has any relation to the fact that Heidegger was an early Nazi enthusiast as well, although he lost his taste for National Socialism fairly quickly, becoming a philosophical persona non grata for Hitlerites by early 1934.

[21] Martin Heidegger The Question Concerning Technology translated by William Lovitt (New York: Harper Perennial, 1977) pp. 53-112.

[22] I should point out that Nietzsche took Being as another of the falsifications we imposed on reality, which for him is in a state of constant becoming, a Heraclitean flux rather than a Parmenidean stasis. It is, we can say, the fundamental error that makes life liveable, “the supreme will to power.” Nietzsche 1967 p. 330.

[23] I should add that there is good reason to believe that both created difficulties for their readers as a kind of “teaching strategy.”

[24] See Colin Wilson’s long essay “Anti-Sartre” in Below the Iceberg (San Bernardino, CA: Borgo Press, 1998).

[25] See, for example Martin Heidegger Early Greek Thinking: The Dawn of Western Philosophy translated by David Farrell Krell and Frank A. Capuzzi (New York: Harper & Row, 1984).

[26] Martin Heidegger Being and Time translated by John Macquarrie and Edward Robinson (New York: Harper & Row, 1962) p. 44.

[27] Martin Heidegger Basic Writings translated by David Farrell Krell (New York: Harper & Row, 1977) p. 370.

[28] Gottfried Benn Prose Essays Poems various translators (New York: Continuum, 1987) pp. 17-25. Heidegger was a reader of Benn’s poetry; like Heidegger, Benn was an early enthusiast for National Socialism, but again like Heidegger, by 1934 he had changed his mind.

[29] Gary Lachman The Secret Teachers of the Western World (New York: Tarcher Penguin, 2015) p. 223. Meister Eckhart’s focus on what he called Istigkeit, “is-ness” is also very close to Heidegger’s “remembering of Being.” Oddly enough, Aldous Huxley, in The Doors of Perception, his account of his experience under the influence of the drug mescaline, speaks of Istigkeit when trying to communicate the impact of the sheer “isness” of everything he saw. This same “isness” was felt by Sartre, during his own mescaline experience, as threatening. Huxley found it beatific. We can say that in this instance, Huxley was more Heideggerian than Sartre.

[30] J.P. Stern Nietzsche (London: Fontana, 1978) p. 136.

[31] Jean-Paul Sartre Nausea translated by Robert Baldick (Harmondsworth, UK: Penguin Books, 1975) p. 13. I should point out that the “crisis of language” expressed here had already been experienced by the Austrian poet Hugo von Hofmannsthal and others in fin-de-siècle Vienna. See Hofmannsthal’s “Lord Chandos Letter” in The Lord Chandos Letter and Other Writings translated by Joel Rotenberg (New York: New York Review Books, 2005).

[32] Stern p. 133.

[33] Friedrich Nietzsche “On Truth and Falsehood in an Extra-moral Sense” translated by Walter Kaufmann in The Portable Nietzsche (New York: Penguin Books, 1977) pp. 46-47.

[34] Robert C. Solomon and Kathleen M. Higgins What Nietzsche Really Said (New York: Schocken Books, 2000) p. 42.

[35] By all accounts postmodernism started as a school of architecture. See Robert Venturi, Denise Scott Brown, Steven Izenour Learning From Las Vegas (Cambridge, MA: MIT Press, 1972). The idea was to forget the sleek lines and flat, unornamented surfaces of the Bauhaus modernist style – which was itself a reaction against the over-ornamentation of earlier, monumental building – and to take inspiration from the kitschy, over the top, gaudy jumble of styles found in Las Vegas and other “road side attractions” such as 1950s diners. Haughty, high modernism was out, and a more accessible “popular” taste was in.

[36] We should also note that “representation” in the sense of particular groups being equally “represented” in media is also a central motivation. The raison d’être of many programs is precisely that, with plot, narrative and other essentials seemingly present as a vehicle for this. We should also not ignore the narcissism that is flattered by reality television making “you” the star of the show. Celebrities are no different from “us” and “we” should get our fair share of the attention and praise they receive.

[37] In the sense that for Marx, “truths” and “values” were not absolute or objective, but a product of the class war and used by the bourgeoisie to keep the workers in place.

[38] Allan Bloom The Closing of the American Mind (New York: Simon and Schuster, 1987). How postmodern Nietzsche really is, is debatable. See Solomon and Higgins pp. 41-43; also Wilson 1998 p. 116. The point made in both is that deconstructionism and postmodernism lack the creative side of Nietzsche’s philosophy. He wanted to “revaluate all values.” Deconstructionism and postmodernism deny the reality of values.

[39] Gary Lachman Turn Off Your Mind: The Mystic Sixties and the Dark Side of the Age of Aquarius (New York: Disinformation Co.) p. 46 on how this related to the general “occult revival” of that decade.

[40] Gary Lachman Dark Star Rising: Magick and Power in the Age of Trump (New York: Tarcher Perigee, 2018) pp. xv-xvi.

[41] Barzun 2000.

[42] Another is Vladimir Putin. See Gary Lachman Dark Star Rising: Magick and Power in the Age of Trump (New York: Tarcher Perigee, 2018) pp. 138-148.

[43] Norman Vincent Peale The Power of Positive Thinking (London: Vermilion, 1990) p. 14. The quotation is actually from the psychiatrist Karl Menninger.

[44] Lachman 2018 pp. 47-49.

[45] And we must remember we are under no obligation to accept his view of things. I personally do not believe that the universe and its inhabitants, ourselves especially, are meaningless. But I understand why Nietzsche did.

[46] https://humanism.org.uk/campaigns/successful-campaigns/atheist-bus-campaign/

[47] Gary Lachman Lost Knowledge of the Imagination (Edinburgh, UK: Floris Books, 2017) and Beyond the Robot: The Life and Work of Colin Wilson (New York: Tarcher Perigee, 2016).